Note: AI is moving fast, so I expect this blog post to be out of date shortly after it is published.

Introduction

There is a huge amount of terminology to get used to when working with AI and LLMs for the first time.

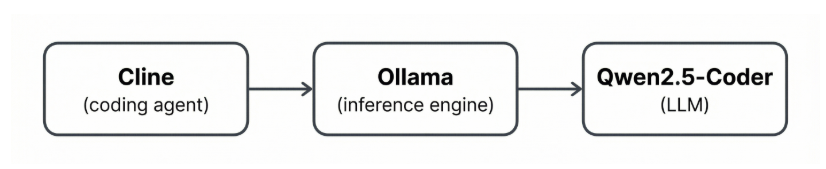

We will look at a simple self-hosted setup using Cline, Ollama and Qwen2.5-Coder and explore the terminology of each step.

This is a simple setup that would allow us to develop using a locally hosted LLM where all of our important data stays on our own machine.

AI Coding Agent

The coding agent is the interface that we interact with. It passes our requests to the model and shows us what comes back.

There are lots of coding agents available. Two popular options if you enjoy VSCode are Cline and Cursor.

- Cline is an extension that brings an AI assistant into your existing Visual Studio Code setup.

- Cursor is a separate editor, forked from VSCode, that offers a more integrated, opinionated AI-first workflow.

Inference Engine

An inference engine is what actually runs the model. It accepts prompts and produces outputs, turning a model file into a usable API.

Ollama is a popular, desktop-friendly inference engine for running open-source LLMs locally. You install it, pull a model (e.g. qwen2.5-coder), and it exposes a local API that tools like Cline can talk to.
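To make "exposes a local API" a little more concrete, here is a minimal sketch of calling Ollama from Python using only the standard library. It assumes Ollama is running on its default port (11434) and that the `qwen2.5-coder` model has already been pulled. The helper names `build_request` and `generate` are made up for this post.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port (11434).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "qwen2.5-coder") -> urllib.request.Request:
    """Build a non-streaming request for Ollama's generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "qwen2.5-coder") -> str:
    """Send the prompt to the local engine and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the whole answer arrives in one "response" field.
    return body["response"]


# Example (needs Ollama running and the model already pulled):
# print(generate("Write a Python function that reverses a string."))
```

A coding agent like Cline does something similar behind the scenes, just with a lot more context wrapped around each prompt.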

There are lots of inference engines to pick from, for example:

- LM Studio

- llama.cpp

- vLLM
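One reason it is fairly easy to swap engines is that many of them can serve an OpenAI-style chat endpoint. The sketch below only builds the request, it does not send it. The base URLs are what I understand to be the usual local defaults, so treat them as assumptions and check your own setup. The `chat_request` helper is a made-up name for illustration.

```python
import json

# Assumed default local base URLs. Every engine lets you change the port,
# so verify these against your own configuration.
BASE_URLS = {
    "ollama": "http://localhost:11434/v1",
    "lm-studio": "http://localhost:1234/v1",
    "llama.cpp": "http://localhost:8080/v1",
    "vllm": "http://localhost:8000/v1",
}


def chat_request(engine: str, model: str, user_message: str) -> tuple[str, bytes]:
    """Return the URL and JSON body for an OpenAI-style chat completion call."""
    url = BASE_URLS[engine] + "/chat/completions"
    body = {
        "model": model,  # model naming differs between engines
        "messages": [{"role": "user", "content": user_message}],
    }
    return url, json.dumps(body).encode("utf-8")
```

Only the base URL and the model name change between engines; the shape of the request stays the same.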

Large Language Model (LLM)

The LLM is the actual intelligence. It is a file (or set of files) of learned weights that capture the patterns of human language and code.

Qwen2.5-Coder is a popular open-source, code-specialised LLM. It is tuned for code generation, and is considered a solid option for a self-hosted coding assistant.

There are lots of open-source models to pick from, for example:

- Qwen2.5-Coder (Alibaba)

- Code Llama (Meta)

- DeepSeek Coder (DeepSeek)

- Phi-3 (Microsoft)

There are also lots of proprietary models that work with Cline directly, for example:

- Claude (Anthropic)

- GPT-4o / ChatGPT (OpenAI)

- Gemini (Google)

These three in particular are often referred to as frontier models: the most capable, flagship models from the main commercial labs.

In this example we are self-hosting, so we have opted for Qwen2.5-Coder.
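Before pointing Cline at the model, it can be handy to confirm that it is actually installed. This rough sketch asks Ollama which models it has locally and checks for ours. It assumes Ollama's default port, and `has_model` and `fetch_tags` are hypothetical helper names.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port (11434).
TAGS_URL = "http://localhost:11434/api/tags"


def has_model(tags_response: dict, wanted: str) -> bool:
    """Return True if a locally installed model matches the wanted name."""
    names = [m["name"] for m in tags_response.get("models", [])]
    # Installed names carry a tag, e.g. "qwen2.5-coder:latest".
    return any(n == wanted or n.startswith(wanted + ":") for n in names)


def fetch_tags() -> dict:
    """Ask the local Ollama server which models it has installed."""
    with urllib.request.urlopen(TAGS_URL) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example (needs Ollama running):
# print(has_model(fetch_tags(), "qwen2.5-coder"))
```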

Summary

In this blog post we have explored the differences between a coding agent, an inference engine and an LLM.

This is a very light-touch blog post, but I hope you have found it a useful introduction to some new terms.